Introduction

You may have heard that ‘computers are faster, more efficient and more accurate than humans’, and that we should therefore rely on them to make significant decisions in the real world. Humans have been, and will always be, somewhat judgemental and prone to error; however, it must not be assumed that algorithms are the best solution to this.

Definitions for a couple of key terms used in this article:

- An algorithm is a formula or set of instructions for solving a problem

- Bias is the tendency to be in favour of a certain idea, where the method to reach this outcome has been unfair (in science we call this a systematic error)

It may be hard to believe that algorithms can produce unfair and ‘biased’ results. Algorithms are developed by humans for a particular purpose, yet the essential concept to understand is that many modern algorithms use machine learning to learn and improve themselves over time.

Machine learning, which is a fundamental branch of Artificial Intelligence, is the method of using historical data as an input to an algorithm, which enables it to become more accurate at predicting outcomes without a human needing to program it explicitly to do so. Simply put, this means that the algorithm studies hundreds or thousands of historical examples in order to train itself to output the end result.

In this way, when humans connect an algorithm to the world wide web, the internet’s vast stores of data allow the algorithm to learn and train itself to output predictions and results based on any patterns or trends that it spots (somewhat like a student studying for an exam by rummaging through hundreds of textbooks at once!)
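To make the idea of ‘learning from historical data’ a little more concrete, here is a minimal sketch in Python. It is only an illustration: the vehicle heights, the labels and the function names `train` and `predict` are all made up for this example, and real machine learning models are far more sophisticated. Nobody writes a rule such as ‘taller than 1.5 m means SUV’; the model works out the averages from the examples it is given.

```python
from statistics import mean

def train(examples):
    """Learn one average value per label from (value, label) pairs."""
    by_label = {}
    for value, label in examples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}

def predict(model, value):
    """Return the label whose learned average is closest to the value."""
    return min(model, key=lambda label: abs(model[label] - value))

# Hypothetical historical data: vehicle height in metres and its label.
history = [
    (1.14, "sports car"), (1.17, "sports car"), (1.20, "sports car"),
    (1.78, "SUV"), (1.84, "SUV"), (1.87, "SUV"),
]

model = train(history)
print(predict(model, 1.25))  # closest to the learned sports-car average
print(predict(model, 1.80))  # closest to the learned SUV average
```

The important point is that the predictions depend entirely on the examples in `history`: change the data and the behaviour of the model changes with it.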

Biased Training Data

At first, this method of training the algorithm seems fairly straightforward and uncomplicated; yet the problem arises due to the actual data that is stored on the internet.

One factor that could introduce algorithmic bias (but most certainly not the only factor) is pre-existing social bias in the dataset. This means that one group may be more heavily represented in the data than another, which leads to a skewed end result, because the algorithm can only ‘learn’ from its ‘training’ data.

If it is unable to collate and study unbiased and unprejudiced data, then inevitably, the algorithm will output these biased and prejudiced judgements.
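A simple first check, sketched below, is to measure how heavily each group is represented before any training happens. The labels ‘group A’ and ‘group B’ and the 90/10 split are invented purely for illustration, and `class_shares` is a hypothetical helper name.

```python
from collections import Counter

def class_shares(labels):
    """Return the fraction of the dataset that belongs to each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}

# Hypothetical dataset in which one group dominates.
labels = ["group A"] * 90 + ["group B"] * 10

shares = class_shares(labels)
print(shares)  # {'group A': 0.9, 'group B': 0.1}
```

A lopsided result like this does not prove that a model will be unfair, but it is a warning sign that the model will see far more examples of one group than the other.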

Algorithmic Bias – step-by-step example

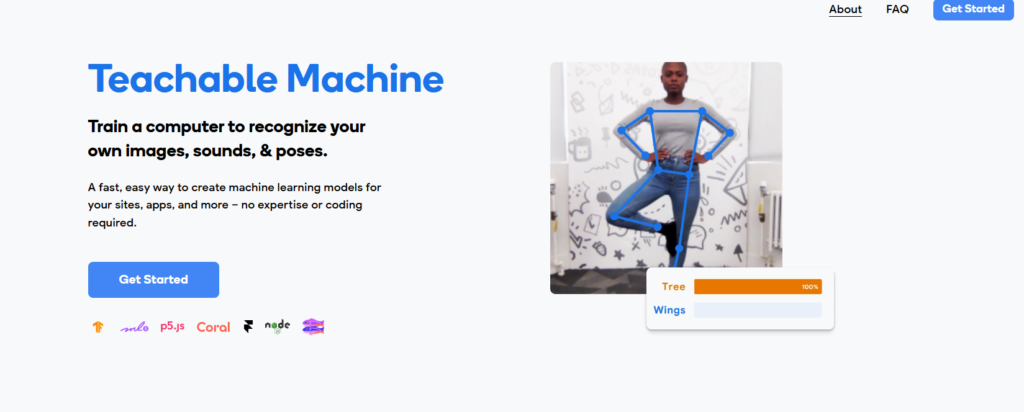

In order to show algorithmic bias in action, I will be using Google’s fantastic Teachable Machine software, where I will train my own machine learning model to learn about a specific dataset and then predict an outcome (after being ‘trained’).

In this example, I will be training the computer to recognise the difference between a Lamborghini and a Range Rover.

Step I

Go to https://teachablemachine.withgoogle.com/ and open a new image project

Step II

Change the class name labels from “Class 1” and “Class 2” to the particular categories (in this case, we will use “Lamborghini” and “Range Rover”). Click on the pen icon to the right of the text to edit the label.

Step III

There are two ways to upload images into the model, so that it can train using this data (ensure that you have enough data to give to the model)

- Click on the webcam icon and then show your objects in front of the webcam

- Click on Upload, and browse for images on your local desktop.

I will choose the latter option (as I do not have multiple Lamborghinis and Range Rovers at my disposal 😅)

After selecting the data to upload for each Class (e.g. images for “Lamborghini” and for “Range Rover”), select the Train Model button. This allows the computer to learn from the various images of the Lamborghinis and Range Rovers, so that we can predict the outcome of a random input in the next step.

It may take a few seconds for the model to train – ensure that you do not switch tabs while doing this, mainly because the entire process is happening right in the browser (the images are not uploaded to a cloud service)

Step IV

Once the Model has been trained, we can now experiment with different inputs and see if the model works! Find new images to use as test data (different to the images you uploaded to train the model).

Ensure that the Input toggle is switched to “ON” and that the dropdown is on “File” (not “Webcam”). Now we can choose an image from our files and see whether the model recognises it as a Lamborghini or a Range Rover.

Algorithmic Bias – EXPLANATION

In the example above, once we added the image samples to each class and trained the model, the computer was able to look for patterns that distinguish the images in one class from those in the other. The aim of this example was for the model to take any input (e.g. an image of a Lamborghini or Range Rover) and tell us which make of car it is, without being explicitly programmed by a human.

However, in the video above, you will see that when we input an image of a Range Rover, the model recognises it as a Lamborghini, or it is unsure of which one to select. Out of all of the test data we used, the majority were classified as “Lamborghini”, even though we had 4 images of Range Rovers.

Why is that? This is algorithmic bias in action.
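One way to make a skew like this visible is to measure the accuracy separately for each class rather than looking at a single overall score. The sketch below does this in Python. The list of test outcomes is hypothetical (it is not a record of my actual test run), and `per_class_accuracy` is a name I have made up for the illustration.

```python
from collections import defaultdict

def per_class_accuracy(results):
    """results is a list of (true_label, predicted_label) pairs."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, predicted in results:
        total[truth] += 1
        correct[truth] += (truth == predicted)  # True counts as 1
    return {label: correct[label] / total[label] for label in total}

# Hypothetical test outcomes: every Lamborghini is recognised,
# but three of the four Range Rovers are labelled "Lamborghini".
results = (
    [("Lamborghini", "Lamborghini")] * 4
    + [("Range Rover", "Lamborghini")] * 3
    + [("Range Rover", "Range Rover")]
)

print(per_class_accuracy(results))
# {'Lamborghini': 1.0, 'Range Rover': 0.25}
```

In this made-up run the overall accuracy is 5 out of 8, which hides the fact that one class is almost always misclassified.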

Cause of the bias

In our example above, we added 15 image samples for the Lamborghinis, but only 2 image samples for the Range Rovers. This meant that the model could only train itself on the data which we gave it – and thus, it gave us skewed outcomes with the test data.

Ultimately, this is how the algorithmic bias comes into action: the training data given to a model is not a full, fair representation of all possible test data – which means that the model is influenced in a certain way to give unfair outputs. (see below for the solution to this algorithmic bias)
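The effect of the 15-versus-2 split can be reproduced with a toy classifier. Please note the assumptions: this sketch uses a simple k-nearest-neighbours vote, which is not how Teachable Machine works internally, and it describes each car by a single invented feature (its height in metres). With only 2 Range Rover samples, a vote among the 5 closest training examples can never be won by “Range Rover”, no matter what the test image looks like.

```python
from collections import Counter

def knn_predict(training, value, k=5):
    """Majority vote among the k training examples closest to value."""
    nearest = sorted(training, key=lambda ex: abs(ex[0] - value))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical feature: vehicle height in metres.
lamborghinis = [(1.10 + 0.01 * i, "Lamborghini") for i in range(15)]  # 15 samples
range_rovers = [(1.80, "Range Rover"), (1.87, "Range Rover")]         # 2 samples
training = lamborghinis + range_rovers

# 1.85 m is a typical Range Rover height in this toy data, yet the
# vote is 3 Lamborghinis against 2 Range Rovers.
print(knn_predict(training, 1.85))  # Lamborghini
```

The two Range Rover samples are the closest matches, but they are simply outvoted by the class that has more training data.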

In real-life situations (which have consequences much more severe than those we have just tested), this can be very dangerous. When asking a model to ‘crawl’ through millions and millions of pieces of data on the Internet (specifically what humans have once inputted onto the world wide web), there is going to be a pre-existing bias already on the Internet.

Thus, when the model trains and studies this vast amount of data – and it is tested – it will give outputs in favour of the more heavily represented part of the dataset. This is unfair because the programmer has inadvertently given the model heavily unbalanced pieces of training data.

Example of Algorithmic Bias in real-life

There is a plethora of examples where algorithmic bias has played a detrimental role in society.

The international company Amazon were trying to make their job application process much more efficient and coherent by introducing a résumé-screening algorithm which would study the thousands and thousands of résumés submitted to the company over a 10-year period, and subsequently use this data to make a judgement as to whether a new candidate would be suited for the role at Amazon.

Many companies were eagerly searching for this kind of AI online recruiting engine, because it would save them a lot of time, and reduce human judgement and bias!

Yet, Amazon had not created, as it were, the best of software…

The training data introduced a great deal of sexism into the job application process, because the majority of those résumés came from men. After studying all of the résumés from the 10-year period, the algorithm learned to penalise candidates who had the word “women’s” in their résumés (such as the “captain of a women’s cricket team” or a “student at an all-women’s university” et cetera).

This led to the algorithm automatically observing patterns that Amazon prefers to hire male candidates over female candidates; ergo, learning that it must also discriminate and show bias against women by not offering them the job at Amazon.
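To see how a model can pick up a pattern like this without anyone intending it, here is a deliberately simplified sketch. It is not Amazon’s system, whose details are not public: the six résumé snippets, the hiring outcomes and the function `word_hire_rates` are all invented for illustration. The code only counts how often each word appeared in a résumé that led to a hire.

```python
from collections import defaultdict

def word_hire_rates(history):
    """history is a list of (resume_text, was_hired) pairs.
    Returns, for each word, the fraction of resumes containing it
    that ended in a hire."""
    seen = defaultdict(int)
    hired = defaultdict(int)
    for text, was_hired in history:
        for word in set(text.lower().split()):
            seen[word] += 1
            hired[word] += was_hired  # True counts as 1
    return {word: hired[word] / seen[word] for word in seen}

# Invented historical data that reflects a biased hiring record.
history = [
    ("captain chess club", True),
    ("captain rugby team", True),
    ("software engineer", True),
    ("software graduate", True),
    ("captain women's chess club", False),
    ("women's college graduate engineer", False),
]

rates = word_hire_rates(history)
print(rates["women's"])                     # 0.0
print(rates["captain"] > rates["women's"])  # True
```

Nothing in the code mentions gender, yet the word “women’s” ends up with the lowest possible score, simply because of the outcomes in the historical data.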

Of course this was clearly unacceptable and Amazon’s artificial intelligence specialists discovered that their own recruiting model was biased against women.

(There are many more examples of algorithmic bias in real-life, such as racial bias, cultural bias et cetera.)

Thus, this allows us to discuss the significant question:

Is AI truly intelligent?

I believe that with our current technological developments, Artificial Intelligence is only as smart as the information it is fed. If the AI algorithm is provided data that is of an unacceptable quality or contains bias, then any decisions that this algorithm makes will be significantly parti pris.

Solutions to Algorithmic Bias

In order to solve the consequential issue of algorithmic bias, we must ensure that any data provided to an algorithm is balanced and contains no pre-existing bias – this ensures that the algorithm can work effectively AND accurately.

If the data used to train an AI model is fair (this can be done by increasing the scrutiny of the data), then we can hope that the results that the model outputs will also be as fair.

Solution to the algorithmic bias in the EXAMPLE above

In order to make the algorithm above more fair and less biased, we must ensure that the training data is balanced.

Before, we had 15 image samples for the Lamborghinis and 2 image samples for the Range Rovers; hence we should now make sure we have equal numbers of training images for each class before we train the model.

Press the Upload button for the Range Rover class and upload more images, so that this class has 10 image samples in total. (Make sure that the Lamborghini class also has 10 image samples by removing any 5 images.)

Now we can train the model once again, and when we test it, the algorithm should be less parti pris and more systematic and logical!
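The rebalancing arithmetic above can be written as a tiny helper. This is just a sketch: `plan_balance` is a hypothetical name, and the target of 10 images per class is the figure chosen in this example rather than any recommended value.

```python
def plan_balance(counts, target):
    """Return how many samples to add (+) or remove (-) for each class
    so that every class ends up with `target` samples."""
    return {label: target - n for label, n in counts.items()}

counts = {"Lamborghini": 15, "Range Rover": 2}
print(plan_balance(counts, target=10))
# {'Lamborghini': -5, 'Range Rover': 8}
```

In other words, we remove 5 Lamborghini images and add 8 new Range Rover images to reach 10 of each.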

We have created a Machine Learning model to distinguish between Lamborghinis and Range Rovers, and we have removed some forms of algorithmic bias.

It is important to note that it is close to impossible to have a dataset with no bias at all (which is why the algorithm above did not classify all of the test data correctly; with more training data, it will hopefully become more accurate over time).

But the first step is to mitigate the bias in humans as fast as possible, and then, we can focus on making the ideal algorithms…

Conclusion

Thank you for reading, and please do leave a comment below (or any questions you may have). Please feel free to share this article with anyone who might find this interesting.

Quote

I would like to finish this article on the quote below, which demonstrates that Artificial Intelligence bases its decisions upon the training data that humans provide. Thus, when we say “Algorithmic Bias”, it is not necessarily the algorithm’s fault, because it is not an omniscient, sentient living entity which understands the “good” and “bad” in the world around us. Perhaps we, as humans, are the cause of this bias – due to the pre-existing social and cultural ‘parti pris’ that has been established in many societies.

“AI is good at describing the world as it is today with all of its biases, but it does not know how the world should be.”

Joanne Chen

Very articulate and informative.

AI denotes Artificial Intelligence. Just as natural food is healthy and artificial colours and supplements are not wholesome, it begs the question whether Intelligence which is artificial can ever be intelligent!!! Nonetheless, Intelligence which can be processed much faster, quicker and more accurately than human Intelligence certainly has scope for a plethora of beneficial applications. Then there is the next quantum leap into Artificial Wisdom in the future which perhaps has much wiser applications 🙂

As a layman, my gist of the article is that the data fed into the machine should be well balanced in order to get an unbiased result. Am I right in my understanding? Please let me know.

Thank you for your comment Mr. J V Shah – you are able to summarise my article in a succinct yet pertinent manner. The fundamental principle is that the data should be made as balanced and impartial as possible, in order to lead to a more successful model.

Thank you for your comments Raj! Indeed, AI is used as a tool to support humans due to its efficiency and speed and the next step is to explore whether AI will ever have consciousness and become self-aware.

Presented to a very high standard, with a great example to illustrate the effects of algorithmic bias.

Great job and effort; “garbage in, garbage out” was the old adage. For the pictorial data set I am assuming AI draws its outcome from pattern recognition. Equal numbers of Lamborghini and Range Rover improved the outcome. I assume large numbers (hundreds of vehicles in equal numbers) may yield even greater precision.

In medicine AI has been very helpful and good in radiology and dermatology. In thousands of X-rays showing tumor lesions, AI can predict a lesion to be cancerous even better than radiologists. Similarly in dermatology, with thousands of pictures of skin moles, AI can predict suspicious skin moles more accurately than dermatologists. AI is very good at pattern recognition, but is very poor with the puzzle of reaching a clinical diagnosis, which requires imagination in addition to the experience of observing patients holistically – the demeanor, the gestures, the varied expressions of symptoms etc.

Yuval Harari’s book Sapiens published in 2011 may be outdated now but was my first introduction to the promises and pitfalls of AI

Wishing you the best in expanding your blog

Thank you very much for your kind and thoughtful comments 😃

I find your comparison between AI and healthcare insightful, because I most certainly agree that Artificial Intelligence can be used as a tool to help solve real-world issues. Artificial Intelligence definitely has a vast potential in this field since the powerful processing of computers enables AI to create more efficient and accurate solutions to enhance our lives. In this case, AI is able to help predict the skin moles, because it is able to learn from huge amounts of training data and observe patterns in the skin which it can use to make a diagnosis.

It brings into question whether AI may even replace dermatologists one day in the future… or will AI always remain a tool to support humans in their quest to find the most majestic solution to a problem.